Ofri Margalit

Based in Tel Aviv · Available Worldwide

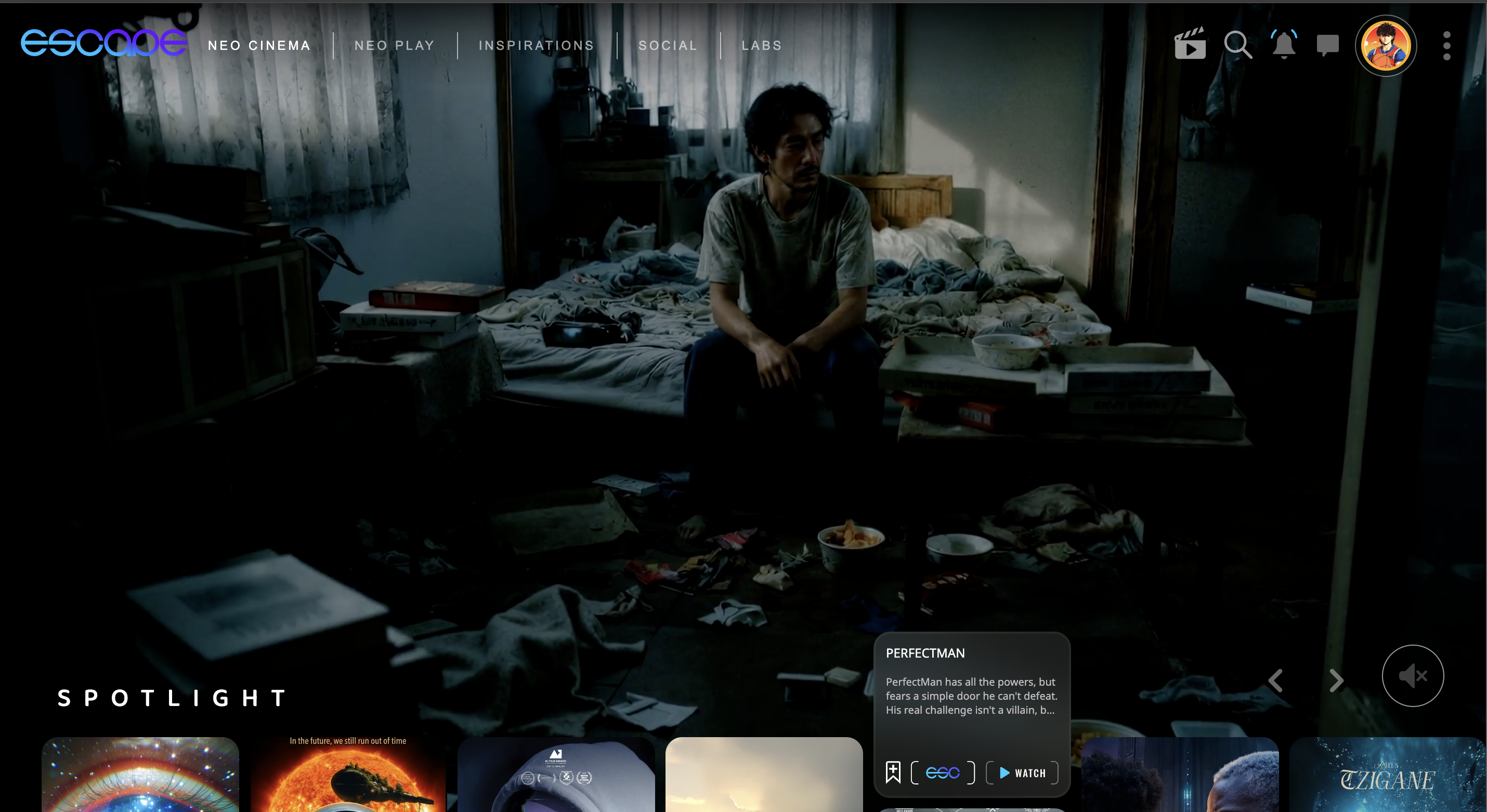

We wanted to tell a story about a hikikomori, the Japanese term for a person who withdraws completely from society and stays isolated at home for months or even years. We first encountered the phenomenon through Shaking Tokyo, a short film by Bong Joon-ho that stayed with us for years.

The creative challenge was clear: how do you build a Japanese-set narrative that feels culturally grounded, cinematically cohesive, and emotionally real?

The technical challenge was bigger. Generative video models were still maturing fast, and the landscape was shifting week by week. Character consistency across shots was unreliable. Some outputs had artifacts, frame drops, or resolution issues. We needed to build a pipeline that could deliver cinema-grade output despite those limitations.

Before generating a single frame, we sat down and wrote a full screenplay, the same kind we'd write for any narrative film. Once the story was locked, we broke it down into a shooting script with scene breakdowns, shot descriptions, and visual intent. We wanted a clear roadmap before touching the tools.

What made this different from traditional filmmaking was the flexibility that came after. On a live-action set, once you wrap, you're locked into what you shot. Here, we could generate new shots mid-edit. If a sequence needed a missing beat, an extra angle, or a tonal correction, we could go back and create it without rescheduling a production day. The script gave us structure. The tools let us keep refining inside it.

We built a multi-stage generative pipeline where each tool had a specific, intentional role. We started in ChatGPT, generating initial frames of our protagonist to explore his look and build a rough character sheet. From there, we moved to Midjourney, which became our main engine for scene stills and carried most of the consistency work, particularly clothing, overall look, and facial continuity. For scenes where exact facial accuracy mattered, we passed stills into Ideogram for character face-swapping and upscaling. The finished stills then went through image-to-video generation in Kling 2.6 and Veo 3. Voice-over was written in Hebrew, translated to Japanese via ChatGPT, then generated in ElevenLabs. Final picture was cut and color-graded in DaVinci Resolve.

Prompting was its own craft inside the pipeline. We used ChatGPT as a starting point for prompt drafts, then refined them against what the models actually produced. Every model had its own quirks, and learning how each one responded to different phrasings became part of the work.

The hardest technical problem was maintaining visual fidelity across the pipeline. Each tool handoff risked quality loss, and some Kling outputs had minor stuttering or frame gaps. We solved it with Topaz Video AI, using frame interpolation to smooth out broken motion and targeted video upscaling to bring everything up to cinematic resolution.

But the deeper challenge wasn't technical. It was keeping the story human. We approached PerfectMan the way we'd approach any narrative short: visual language first, emotion first, cinematic grammar first. Both of us come from live-action filmmaking, and we brought that same discipline here: shot design, pacing, color decisions, sound layering.

What we learned, and what the final sequence of the film proves, is that the tools don't carry the emotional weight. The cinematic decisions do. AI expanded what was possible. It didn't replace what mattered.

1st place at the Dor Awards, out of ~400 films submitted.

Clip from the official Dor Awards judges' session.

Selected as Best Storytelling of 2025 by AI LIVE (previously known as Creativize.ai), a leading AI filmmaking community on LinkedIn with 247K followers.

Academy Award winner Roger Avary (Pulp Fiction) selected PerfectMan as his personal favorite among the Dor Awards finalists, as featured in the judges' reaction video.

Academy Award winner John Gaeta (The Matrix) selected PerfectMan for Brave New Stories, an online AI film festival on his platform Escape.ai dedicated to sci-fi and fantasy storytelling.

The campaign takes direct inspiration from the iconic Israel Is Drying Up public awareness campaign, a visual reference instantly recognizable to Israeli viewers. The name "Israel Is Getting Addicted" deliberately echoes it.

The project required a consistent character that could carry the message across the full length of the piece, with minimal resources and fast turnaround.

I started by designing the character in Nano Banana Pro, generating around 20 reference images of the same figure from different angles, all on a clean white background. I built these using a structured prompt template on Higgsfield, where each prompt returned the same character from a new angle. Once I had the reference set, I loaded the images into Higgsfield's Soul ID feature to lock in a consistent character identity that I could reuse freely throughout the rest of the pipeline.

For video, I used Kling 3.0 for image-to-video generation, and HeyGen for lip-syncing and upscaling. The voice-over was recorded by the client. The raw audio quality needed work, so I processed it through AI audio tools and cleaned it further in DaVinci Resolve, where I also did the final edit and color grade.

Two short pieces from the same trip. Different countries, different light — shot as a thematic pair.

Three children on a quiet mountain road in northern Vietnam. An older sister watching her younger brothers, left in her care while the landscape stretched endlessly behind them.

Shot on BMPCC 6K · 4:3, film aesthetic · Graded in DaVinci Resolve.

A single tracking shot from a winter evening in Hokkaido. Voice-over generated in ElevenLabs.

Shot on BMPCC 6K · 4:3, film aesthetic · Graded in DaVinci Resolve.

Narrative and visual work for music — directed, shot, and graded on location.

Bo Elay — “Heichal”

Directed, shot, edited, and color-graded by Ofri Margalit.

Interia — “Make Believe Heaven”

Directed by Omer Eventov. Cinematography and color grading by Ofri Margalit.

Visual craft built through film sets, sharpened by code.

Ofri Margalit is a narrative filmmaker and AI creative based in Tel Aviv, currently pursuing a B.Sc. in Computer Science.

His work sits at the intersection of two disciplines that rarely meet: years of experience in professional film productions, including Netflix series, feature films, and prime-time TV, alongside hands-on generative AI filmmaking.

His AI short film PerfectMan won 1st place at the Dor Awards and was recognized by Academy Award winners Roger Avary and John Gaeta.

After years on professional film sets, I've watched directors, DPs, and producers spend months in pre-production trying to answer one question: what will this actually look like? Storyboards help, but they're static. Mood boards help, but they're not the film.

It's a gap I keep coming back to. The idea I've been shaping is a pre-production platform where filmmakers could upload their script, define their visual language through prompts and style presets, and receive cinematic image sequences that function as realistic storyboards. Selected sequences could then be animated into short motion previews, letting teams build a proof of concept for their vision before a single day of shooting.

Generative AI has made pre-visualization faster than it's ever been. The opportunity is to make it work for the people who actually need it: filmmakers, through an interface that speaks their language.

For now it's an observation and a direction, shaped by the problem I keep seeing from both sides of the camera.